Data centers are undergoing significant transformations driven by rapid developments in AI, the need for greater efficiency, and the demands of a constantly evolving digital landscape.

Why now?

- AI workloads are pushing capacity to the brink. McKinsey predicts global data center capacity could triple to 219 GW by 2030, while Goldman Sachs expects a 165% increase to 122 GW by the same year.

- IDC forecasts that global AI spending will more than double by 2028, reaching $632 billion.

- According to the DOE, U.S. data centers may account for 6.7–12% of all electricity usage by 2028, up from 4.4% in 2023.

- Northern Virginia, the world’s largest data center hub, is nearing grid saturation.

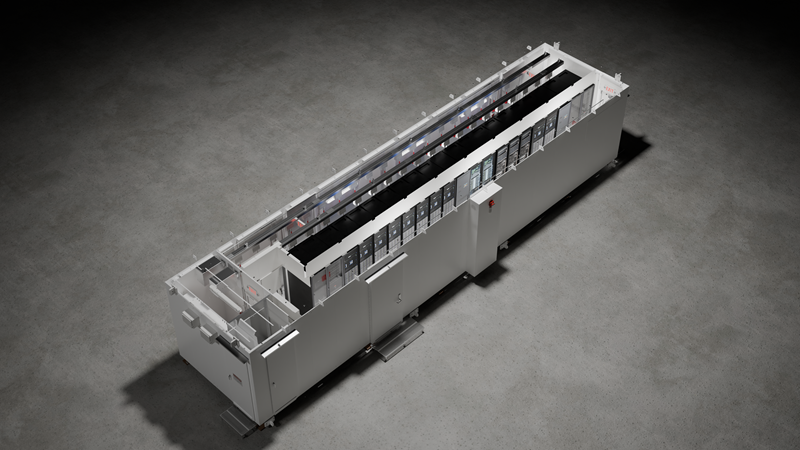

Beyond power, efficiency, and capacity concerns, AI infrastructure is rapidly shifting its focus from model training to real-time inference. Training processes large datasets to develop and refine models, while inference uses those trained models to make real-time predictions or decisions based on new data. Unlike training, inference requires high-efficiency, high-density environments close to end users, making modular data centers an ideal solution for this next phase of AI-driven demand.

So why is inference the next big thing?

- AI Compute Demand: Running trained models to generate predictions is a major driver of AI compute demand, requiring significant processing power. Unlike AI training, which is computationally intense but often performed less frequently, inference happens constantly, especially in real-time applications like search engines, chatbots, recommendation systems, and autonomous driving. As AI applications grow, the need for efficient and scalable infrastructure to support inference workloads becomes critical.

- Energy Efficiency: Inference workloads require high-density compute resources, which in turn demand advanced cooling and energy management solutions to maintain efficiency and sustainability.

As inference becomes the dominant workload, modular infrastructure is poised to deliver the speed, scalability, and proximity modern applications require. The benefits of using modular data centers for inference workloads include:

- Scalability: Rapid deployment and scaling to meet AI compute demands.

- Efficiency: Optimized energy usage and cooling, reducing power consumption and environmental impact.

- Flexibility: Adaptable to various environments, ideal for edge inference and large-scale expansions.

- Sustainability: Lower carbon emissions and water usage through renewable energy and efficient cooling.

- Cost-Effectiveness: Faster deployment and lower capex, making them financially attractive for AI expansion.

CDM is redefining the future of AI-ready modular data center infrastructure. From edge deployments to AI inference hubs, we are designing and building the backbone of tomorrow’s intelligent world.