AI infrastructure planning has moved beyond the edge-versus-core debate. For hyperscalers, neocloud providers, and enterprise IT teams, the more useful question is: which workload belongs where, and what physical infrastructure pattern is required to support it?

Inference, training, fine-tuning, retrieval, and agentic workflows do not behave the same way. They have different latency expectations, data locality requirements, network paths, power profiles, density needs, security boundaries, and operational constraints. When an AI agent performs multi-step reasoning, every millisecond of network “tax” is paid again on each sequential model call, turning a minor delay into a design bottleneck.

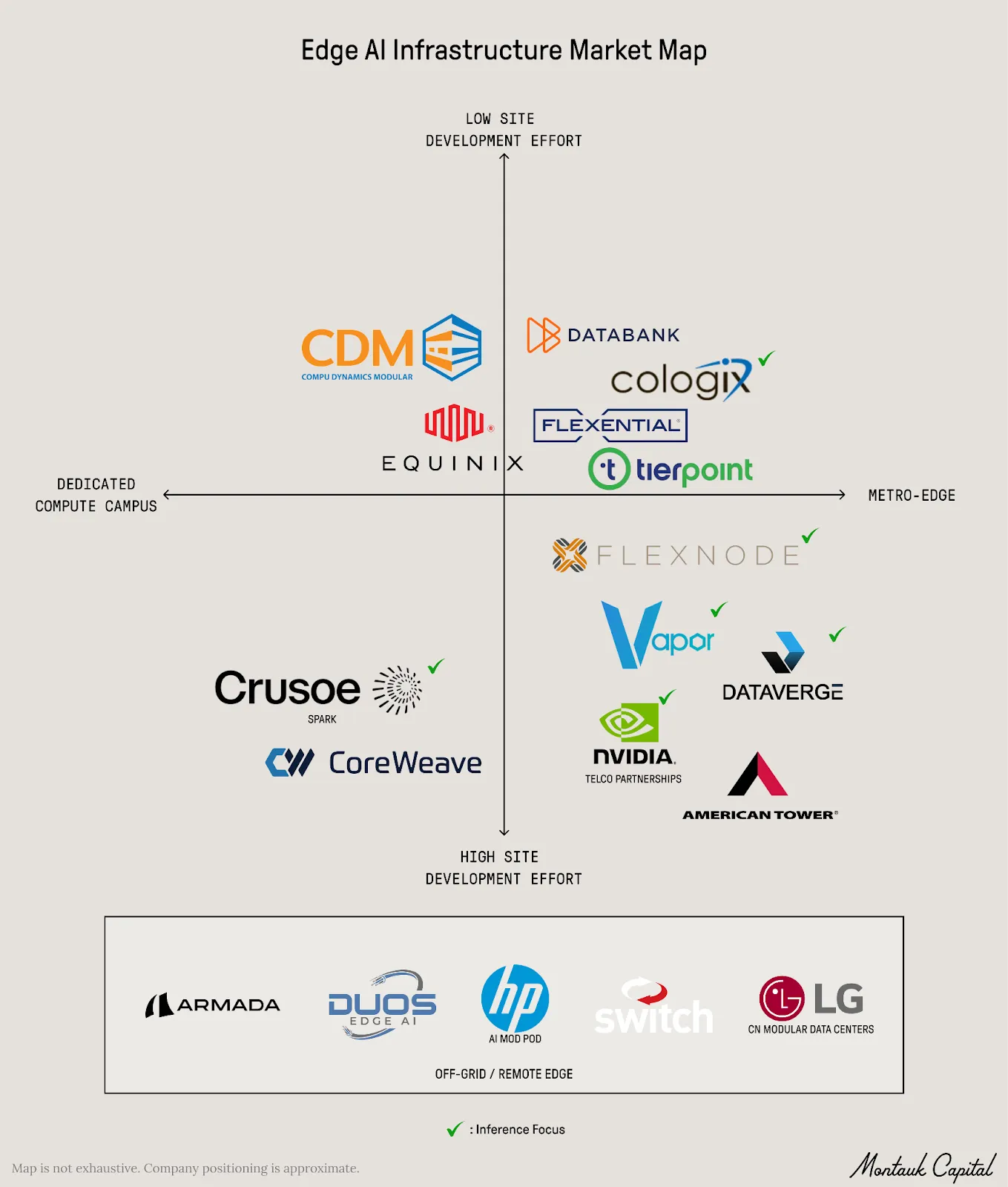

A recent AI infrastructure market map report from Montauk Capital framed this well through the lens of inference, noting how latency can compound across repeated model calls. For physical AI, industrial automation, robotics, financial systems, and other real-time environments, that delay becomes more than a performance issue. It becomes a design constraint.
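
To make the compounding concrete, here is a minimal back-of-the-envelope sketch. It assumes the agent's model calls are strictly sequential, so each call waits on one full network round trip. The function name, the round-trip times, and the call count are all hypothetical figures chosen for illustration; they are not measurements and do not come from the report.

```python
def added_network_latency_ms(round_trip_ms: float, sequential_calls: int) -> float:
    """Network time added to one task when every model call pays one round trip.

    Assumes calls run one after another, so delays add up instead of overlapping.
    """
    if round_trip_ms < 0 or sequential_calls < 0:
        raise ValueError("round_trip_ms and sequential_calls must be non-negative")
    return round_trip_ms * sequential_calls


# Hypothetical placements and round-trip times, for illustration only.
placements = {"nearby site": 5.0, "distant region": 60.0}
calls_per_task = 20  # hypothetical number of model calls in one agent task

for name, rtt_ms in placements.items():
    total_ms = added_network_latency_ms(rtt_ms, calls_per_task)
    print(f"{name}: {total_ms:.0f} ms of network delay per task")
```

Under these assumed numbers, the same 20-call task carries 100 ms of network delay from the nearby site and 1,200 ms from the distant one. The per-call difference looks small; the per-task difference is what the workload actually experiences.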

But that does not mean everything moves to the edge. It means infrastructure has to be placed with more intent.

Some inference workloads need to sit closer to users, machines, enterprise data, or sovereign environments. Learning and training environments may need higher-density infrastructure built around accelerated compute, power architecture, and thermal readiness. Campus-scale AI factories may need to be deployed where power, land, and network connectivity come together. For those campuses, the goal is to lower the site-development burden, so teams can focus more on the compute and less on the concrete.

Each environment has a different job to do. So each one needs a different deployment pattern.

That is where modular data center infrastructure becomes more than prefabrication. At its best, modular design gives teams a way to standardize what should be repeatable while still configuring the environment around the workload, the site, the power path, and the network topology. It also provides a more adaptable foundation as hardware requirements continue to shift.

CDM is in these conversations every day with customers planning AI infrastructure across distributed inference, learning and training environments, and campus-scale AI factory deployments. Those conversations have shaped how we think about modular design: repeatable where speed and consistency matter, configurable where the workload and site demand it.

Our architecture is developed with the application in mind, not the other way around. The goal is not to force every AI workload into the same modular box. The goal is to match the right infrastructure pattern to the right workload while reducing fragmented handoffs between trades, contractors, vendors, integrators, commissioning teams, and service providers.

Because in AI infrastructure, speed is not just about how fast something can be built. It is also about how much complexity can be removed between the initial design intent and the moment the system is ready to support real workloads.

Edge, core, and AI factories are not competing destinations. They are interconnected layers of the same enterprise IT architecture.

If your team is planning its next AI infrastructure project, CDM is ready to be part of the conversation, helping align modular strategy with the specific reality of your workload, your site, and your deployment goals.